- Building a Scalable Backend with Express, TypeScript and Mongoose

- State Management Made Simple with Redux Toolkit in React

- How to integrate Swagger with an ASP.NET MVC application

- Streamline API Development and Testing with Postman

- Microsoft Office 365 Tenant to Tenant Mailbox Migration

- BI Integration with Microsoft Dynamics AX

- BI Integration with Microsoft Dynamics GP

- BI Integration with Microsoft Dynamics NAV

- BI Integration with Microsoft Dynamics SL

- Compliance Risk Management in Microsoft Dynamics AX

- Compliance Risk Management in Microsoft Dynamics GP

- Microsoft Dynamics AX CRM Functionality

- Quality Assurance in Microsoft Dynamics GP

- Quality Management Functionality in Microsoft Dynamics AX

- The 2 Es (Eco System and Evolution) in Android

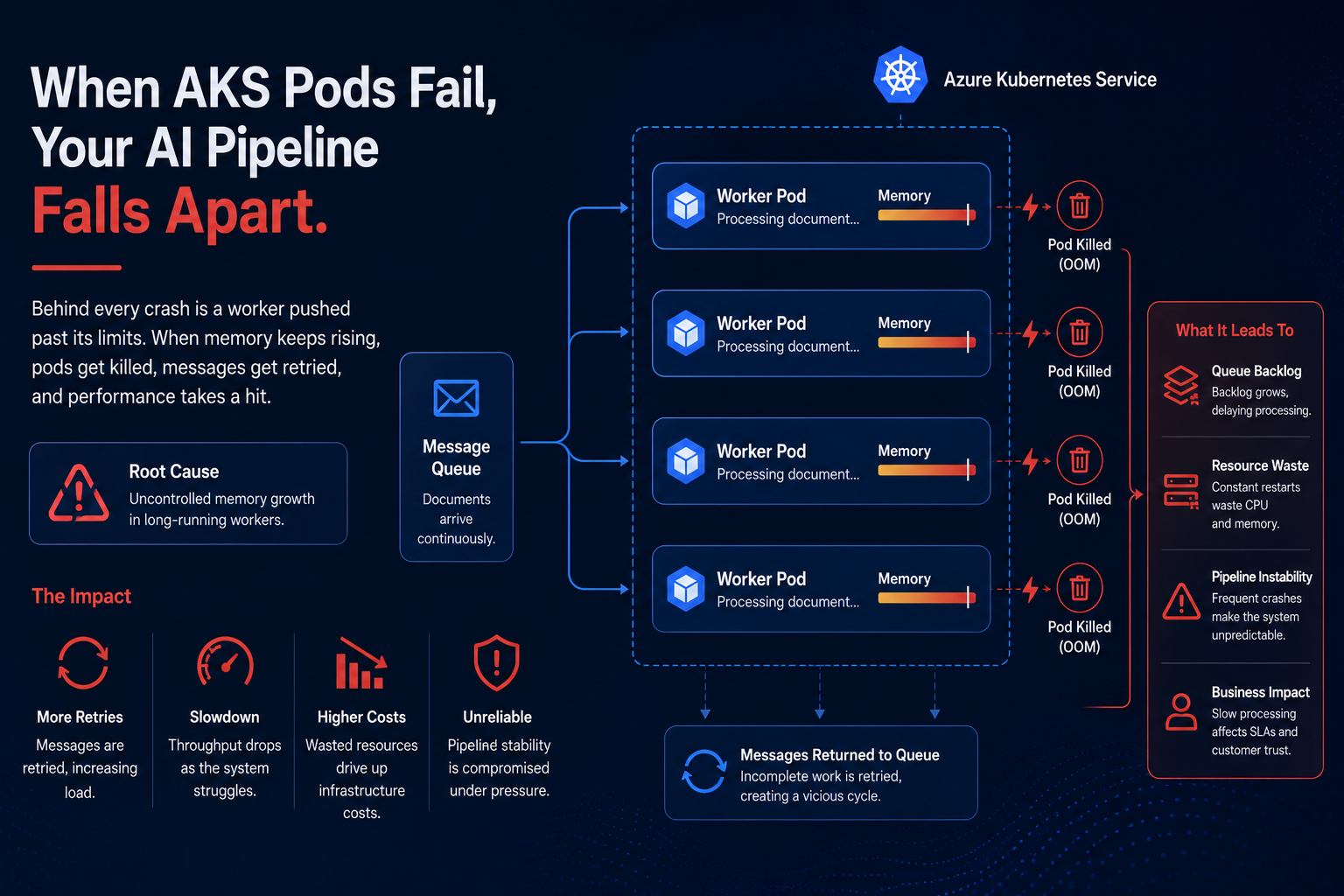

When Perfect Code Still Crashes: How We Stopped Kubernetes Pod Failures in Our AI Pipeline

Everything in our AI document processing system worked perfectly until real traffic arrived. During peak workloads, Kubernetes pods running on Azure Kubernetes Service began restarting repeatedly. The processing logic was stable, yet memory usage kept rising until pods were terminated.

There was no memory leak. Freed memory simply was not returned to the operating system quickly enough. After a worker processed a large document, its memory usage stayed elevated, then crept higher with every new task. Eventually, Kubernetes enforced the pod's memory limit and terminated it, which triggered retries and put additional pressure on the rest of the system.

Rather than redesigning the pipeline, we shifted lifecycle responsibility to the workers themselves. After completing and acknowledging a task, each worker evaluated its memory usage, runtime and processed message count. If predefined thresholds were reached, the worker exited gracefully before requesting another job. A lightweight supervisor immediately launched a fresh worker, keeping throughput uninterrupted.
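The self-recycling check described above can be sketched roughly as follows. This is a minimal illustration, not our production code: the threshold values, the `RecycleLimits`, `should_recycle`, `run_worker` and `supervise` names, and the `fetch`/`process`/`ack` callables are all hypothetical stand-ins for whatever queue client and processing logic a pipeline actually uses. Reading memory from `/proc/self/statm` assumes a Linux container.

```python
import os
import subprocess
import time
from dataclasses import dataclass
from typing import Any, Callable, Optional, Sequence


@dataclass(frozen=True)
class RecycleLimits:
    """Hypothetical thresholds; tune them well below the pod's memory limit."""
    max_rss_bytes: int = 1_500 * 1024 * 1024
    max_runtime_seconds: float = 3600.0
    max_messages: int = 500


def read_rss_linux() -> int:
    """Current resident set size in bytes (Linux only, via /proc)."""
    with open("/proc/self/statm") as f:
        resident_pages = int(f.read().split()[1])
    return resident_pages * os.sysconf("SC_PAGE_SIZE")


def should_recycle(rss_bytes: int, runtime_seconds: float,
                   messages_processed: int,
                   limits: RecycleLimits) -> Optional[str]:
    """Return the name of the threshold that was reached, or None."""
    if rss_bytes >= limits.max_rss_bytes:
        return "memory"
    if runtime_seconds >= limits.max_runtime_seconds:
        return "runtime"
    if messages_processed >= limits.max_messages:
        return "messages"
    return None


def run_worker(fetch: Callable[[], Optional[Any]],
               process: Callable[[Any], None],
               ack: Callable[[Any], None],
               read_rss: Callable[[], int],
               limits: RecycleLimits,
               clock: Callable[[], float] = time.monotonic) -> str:
    """Process messages until a threshold is reached, then return the reason.

    The check runs only after a task is completed and acknowledged, so the
    worker never exits while holding an unfinished message.
    """
    started = clock()
    processed = 0
    while True:
        message = fetch()
        if message is None:
            return "queue_empty"
        process(message)
        ack(message)
        processed += 1
        reason = should_recycle(read_rss(), clock() - started, processed, limits)
        if reason is not None:
            return reason  # exit gracefully before requesting another job


def supervise(command: Sequence[str], max_launches: Optional[int] = None) -> int:
    """Relaunch the worker command each time it exits; return the launch count."""
    launches = 0
    while max_launches is None or launches < max_launches:
        subprocess.run(list(command), check=False)
        launches += 1
    return launches
```

In this sketch a worker entry point would call `run_worker(..., read_rss=read_rss_linux, limits=RecycleLimits())` and exit the process when it returns, while `supervise` runs as the container's main process and starts a replacement. Because the operating system reclaims all of a process's memory when it exits, each fresh worker starts from a clean baseline.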

The result was clear. Pod crashes disappeared, retries dropped significantly and memory behavior became predictable even during traffic spikes.

The key lesson is simple. Cloud reliability is not only about scaling infrastructure. True resilience comes from designing services that understand their own limits and restart themselves before failure occurs.
